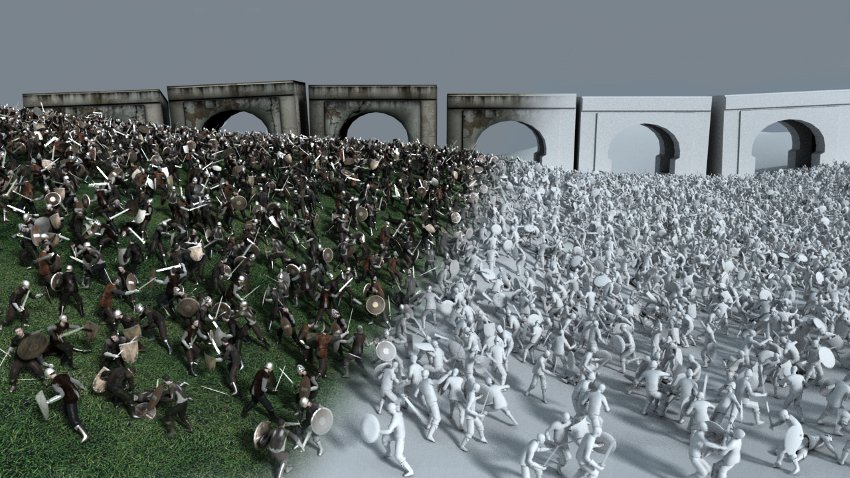

It’s been more than twenty years since Peter Jackson’s Lord of the Rings trilogy used the newly developed Multiple Agent Simulation System in Virtual Environment (MASSIVE) software to create and animate armies of orcs and elves. MASSIVE famously took much of the misery out of big battle scenes, generating thousands of sprites that would battle among themselves. Well, they were supposed to. Early prototypes had trouble getting the sprites to fight each other – the developers literally had to make them more stupid and foolhardy before they’d get into it.

Regardless, MASSIVE had plenty of other obvious uses and, as processing power ramped up, would be purloined by Mamoru Hosoda to generate schools of self-aware fish in Mirai. Soon after, Yuhei Sakuragi would use similar “deep learning” algorithms to get the crowd scenes in his Relative Worlds to effectively animate themselves.

Such applications were just the tip of the iceberg. As demonstrated recently by Dwango’s Yuichi Yagi, we now have A.I. software packages that can be trusted to generate the in-betweens that fill the gaps between key frames, threatening to put a big chunk of the animation business out of work. Generative A.I. can now take a photograph and turn it into a 3D environment, or take a portrait and make it come to life. Tools like DALL·E 2 can even guess what might be off-screen or out of frame: predictive text, but for images. Given such leaps in ability, it’s not hard to see that the next generation of animation labourers could be reduced to “human fallback” – the supervisor minions who pop their heads in every now and then to click an approval or reject a bodged model, based on a Stable Diffusion scraping of Every Anime Ever Made.

But how long will we have to wait before A.I. worms its way into other areas? Surely there’s already enough content to process, and expectations low enough in certain genres, for an A.I. scriptwriter to plot out an entire anime show? Feed a hundred light novels into a hopper, and see if the Plototron 3000 comes up with a world-beating idea for… I don’t know, a teenager in another world with a sentient smartphone.

I’m not one of the doomsayers, yet. Yuhei Sakuragi estimated that human fallback was required on almost half the working hours of his deep-learning scenes. “The conclusion was that you should probably aim for 50 or 60 per cent of completion, then shape it with human hands afterwards,” he said. Computer animation itself was once decried as a poison that would destroy anime… instead, it remade the medium and gave us unexpected talents like Makoto Shinkai.

Jonathan Clements is the author of Anime: A History. This article first appeared in NEO #227, 2023. A month after this article first appeared, Rootport’s Cyberpunk Peach John was hailed as the “first manga drawn by an A.I.”